Docker is a containerization platform that offers a distinctive way to package and distribute applications, streamlining work across the whole technology stack. From simplifying development to making production deployments more efficient, Docker has become an indispensable tool for modern development teams.

Its strength lies in its ability to package an application and its dependencies into a container, so that the application behaves the same way in every environment. This eliminates the typical “works on my machine” problem and creates a solid bridge between development, testing, and operations teams. With Docker, companies can accelerate the software development lifecycle, improving not only collaboration between teams but also the scalability and security of their applications. The approach benefits both small startups and large corporations, adapting to a wide range of needs and technical scenarios.

Docker uses container technology to isolate and run applications. Under the hood, it relies on two Linux kernel features: cgroups and namespaces. Cgroups (control groups) limit and account for the hardware resources, such as CPU and memory, that a container can use, while namespaces give each container its own view of the operating system, including its own process IDs, network interfaces, mount points, and hostname. Together, they allow each container to run its own application, libraries, and files independently of other containers.

Unlike Docker, virtual machines (VMs) operate by virtualizing the hardware to run different operating systems on the same physical machine. Each VM runs its own full operating system, which can result in significant resource usage and longer boot times.

In contrast, Docker is more resource-efficient. Because containers share the host's operating system kernel and isolate only the applications and their dependencies, they consume fewer resources than VMs and start much more quickly. A container does not boot an independent operating system: its image carries only the libraries and files the application actually needs, while the kernel is provided by the host. This efficiency makes Docker an attractive option for application deployment, especially in environments where resource density and efficient use matter.

However, Docker and VMs are not mutually exclusive and can be combined to get the benefits of both. Docker provides a lightweight and efficient way to run multiple isolated applications on the same hardware, while virtual machines offer stronger isolation, since each VM runs its own kernel; this may be necessary for certain security or resource-management requirements.

Docker's versatility lets us not only build isolated, self-contained environments but also streamline the development and production deployment of our applications by providing a consistent environment. Developers can package all the necessary dependencies and configuration into a Docker image, ensuring that the application runs the same way on any machine. For administration and deployment, Docker offers significant advantages in efficiency and scalability: because containers are lightweight, many instances of an application can be started quickly and with modest resource usage, which is crucial in production environments.
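As a rough illustration of starting several instances of the same application, the loop below launches three containers from one image in the background. The *nginx:alpine* image, the host ports 8081 to 8083, and the *web-808x* container names are arbitrary choices for this sketch, not anything prescribed by Docker.

```shell
#!/bin/sh
# Hypothetical example: nginx:alpine, ports 8081-8083 and the web-808x
# names are arbitrary choices.

# Start three instances in the background (-d), publishing each container's
# port 80 on a different host port (-p host:container).
for port in 8081 8082 8083; do
  docker run -d --name "web-$port" -p "$port:80" nginx:alpine
done

# Count and list the running instances.
running=$(docker ps -q --filter "name=web-808" | wc -l | tr -d ' ')
echo "running instances: $running"
docker ps --filter "name=web-808"

# Stop and remove all three (-f stops running containers before removing).
docker rm -f web-8081 web-8082 web-8083
```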

Moreover, Docker images can be run by orchestration platforms such as Kubernetes, which manage large-scale workloads and deployments across server clusters. This makes it easier to scale applications according to demand and can reduce infrastructure costs. Lastly, Docker also improves security by isolating applications from one another, reducing the risk of conflicts and shared vulnerabilities.

As we can see, the advantages are numerous, so let us clarify a few basic concepts before continuing.

- Image: An image is a template, or starting point, for creating containers. It can be thought of as a package or snapshot containing a base operating system filesystem (but not a kernel, which comes from the host), software, the application, and all the configuration and dependencies it needs to function. Images are immutable: once created, they cannot be modified, so every change produces a new image. Their main use is to create containers: when you run a container, Docker takes the corresponding image and uses it as the base on which the application runs.

- Container: A container is a runnable instance of an image. You can think of it as an isolated, lightweight environment where your application runs. Containers provide a consistent environment, so the application behaves the same way regardless of where it runs, whether on your local machine, in a test environment, or in production. They also offer great scalability and flexibility, since you can run many containers on the same machine.

- Volume: A storage system used to persist data generated and used by containers. They are important because containers are ephemeral by nature, meaning that when they are deleted, all data created within the container is lost. Volumes solve this problem by providing a space where data can be stored and managed outside the container’s lifecycle. They are often used to store database information, configuration files, or any other type of information you need to maintain after the container has been stopped or deleted. This is crucial for applications that require data persistence.

With these basic Docker concepts in mind, we are now ready to dive into a practical example in which we will use an existing Docker image to run a simple application. This will help you better understand how Docker manages images and containers.

Before starting, it’s important to ensure that Docker is installed on your system. The Docker installation process on any operating system is quite straightforward, and you can find a detailed guide on Docker’s official website to follow the process step by step.

Open a terminal on your system and use the *run* command to start a container from an image called *hello-world*, which greets us on the screen. It is as simple as:

```shell
docker run hello-world
```

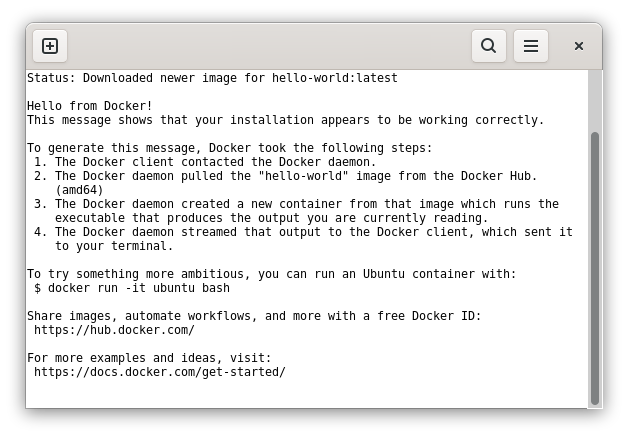

Its output ends by explaining the steps Docker followed to run the application. First, the Docker client contacts the Docker daemon, a background process that manages images, containers, and related tasks. In this case, the daemon receives the instruction to run a container using the *hello-world* image.

The daemon then looks for the image on your local system and, if it is not found, downloads (pulls) it from Docker Hub, a public online registry of Docker images. Once the image is available, the daemon creates a new container from it, a small isolated box in which the program that generates the message you are reading runs.

Finally, the daemon sends the container's output, the *Hello from Docker!* message, back to the Docker client, which displays it in your terminal. This flow from the client to the daemon and back to the client is typical of Docker operations and is a good example of how Docker runs containers and manages images.

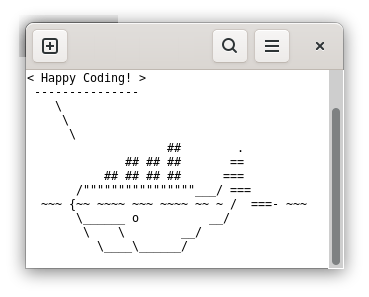

Some images accept parameters directly on the command line when you run them, which opens up many possibilities for customization and interaction. An example is the *docker/whalesay* image, which displays a custom message in the terminal as a speech bubble from an ASCII-art whale, the friendly character that has become Docker's emblem.

```shell
docker run docker/whalesay cowsay Happy Coding!
```

When you run the command with your text after *cowsay*, Docker automatically downloads the *whalesay* image if it is not already on your system, as it does with any image. It then creates a container from the image and runs the *cowsay* program with the message you provided, and the result is your message displayed in the terminal alongside a talking whale. This example demonstrates not only Docker's ability to run applications in isolated containers but also how simply you can interact with those applications.

Docker is an incredibly powerful and versatile tool that is changing the way we develop and deploy applications. The *hello-world* and *whalesay* examples show how Docker simplifies running applications in isolated, consistent environments. They only scratch the surface of what Docker can do, but they already demonstrate its ease of use and how it can make software development and deployment more efficient and free from environment-related problems.

For a more practical example, you can start a minimal Linux environment using a lightweight image such as Alpine Linux, known for its simplicity, efficiency, and small size, which makes it ideal for experimenting with Docker containers. The container runs on the host's kernel, so what you get is Alpine's userland rather than a separate kernel. To start, open your terminal and run the following command:

```shell
docker run -it alpine /bin/sh
```

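The *-it* flags combine *-i*, which keeps the container's standard input open, and *-t*, which allocates a pseudo-terminal. Together they let you interact with the container through a shell session, in this case */bin/sh*, a common command interpreter in Linux distributions.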

Once executed, you can explore a basic Linux environment. You can try commands like ls to list files, apk to manage packages (Alpine’s package manager), or even install and run simple applications. If you want to know more Linux commands, our introductory article has a good list.

I encourage you to try Docker for yourself. Experiment with different images available on Docker Hub, create your own containers, and observe how they can improve your workflow. Remember, though, that changes made inside a container are not carried over to new containers: each *docker run* starts from the unmodified image, and any data not stored in a volume is lost once the container is removed. Docker is an essential tool for developers and system administrators alike.

In our next article, we will delve into more advanced Docker concepts. We will also address other features and best practices that will help you make the most of Docker.

So, stay tuned and ready to dive into more advanced aspects of Docker! Whether you’re taking your first steps in the world of containers or looking to perfect your existing skills, Docker has something to offer for everyone. Happy Coding!